APPLICATION

The project is divided into several Python modules that compare four approaches: serial programming, a CUDA GPU implementation, an MPI implementation, and an MPI+CUDA implementation.

First, log in to a Resonance compute node and make sure the environment is loaded. (It should be loaded automatically.)

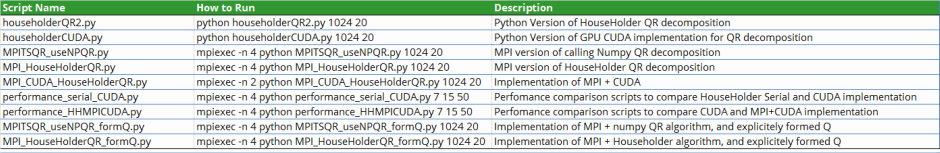

You can run each of the scripts listed below.

Details

· householderQR2.py

This script implements the Householder algorithm for QR factorization (serial version).

It can be run as: python householderQR2.py 1024 20. Here 1024 is the number of rows and 20 the number of columns, so the matrix is 1024 * 20.
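For readers new to the algorithm, here is a minimal NumPy sketch of textbook Householder QR. It is not the code in householderQR2.py, and the function name householder_r is hypothetical. Each step applies one reflector H = I - 2vv^T to the trailing block as a matrix-vector product followed by a rank-1 update. On a GPU these steps map onto BLAS level-2 routines (gemv, ger), which is the kind of work a library such as CUBLAS can take over.

```python
import numpy as np


def householder_r(A):
    """Return the n x n upper-triangular R factor of a tall m x n matrix A."""
    R = np.array(A, dtype=float)  # work on a float copy; A is left untouched
    n = R.shape[1]
    for k in range(n):
        # Reflect column k (rows k and below) onto a multiple of e_1.
        v = R[k:, k].copy()
        alpha = np.linalg.norm(v)
        # Adding sign(v0) * ||v|| to v0 avoids cancellation.
        v[0] += alpha if v[0] >= 0 else -alpha
        norm_v = np.linalg.norm(v)
        if norm_v == 0.0:
            continue  # column is already zero below the diagonal
        v /= norm_v
        # Apply H = I - 2 v v^T to the trailing block:
        w = v @ R[k:, k:]                  # matrix-vector product (gemv)
        R[k:, k:] -= 2.0 * np.outer(v, w)  # rank-1 update (ger)
    # Rows below n are now (numerically) zero; keep the triangular n x n part.
    return np.triu(R[:n, :])
```

For example, householder_r(np.random.rand(1024, 20)) returns a 20 * 20 R, matching the command-line example above. Because Q has orthonormal columns, R^T R equals A^T A. This gives a quick correctness check that does not depend on sign conventions.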

· householderCUDA.py

This is the CUDA GPU implementation of the Householder algorithm. It uses routines from the CUBLAS library for the matrix multiplications.

It can be run as: python householderCUDA.py 1024 20

· MPITSQR_useNPQR.py

This script uses MPI to implement the TSQR (tall-skinny QR) algorithm. Within each TSQR block, it calls NumPy's built-in QR function.

It can be run as: mpiexec -n 4 python MPITSQR_useNPQR.py 1024 20
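The TSQR idea can be illustrated without MPI. This sketch simulates one level of the reduction in a single process: each row block stands in for one MPI rank, and the list comprehension stands in for the parallel local QRs. It assumes an even row split with np.array_split and a flat, single-step reduction. The actual script may split rows or combine the R factors differently, for example with a binary tree. The name tsqr_r is hypothetical.

```python
import numpy as np


def tsqr_r(A, nblocks):
    """One-level TSQR (R factor only), simulated in a single process."""
    # Split the rows into nblocks pieces, one per (would-be) MPI rank.
    blocks = np.array_split(A, nblocks, axis=0)
    # Step 1: each rank factors its own block and keeps only its n x n R.
    local_rs = [np.linalg.qr(b, mode="r") for b in blocks]
    # Step 2: the small R factors are collected on one rank and stacked...
    stacked = np.vstack(local_rs)
    # ...and factored once more; the result is an R factor of the whole A.
    return np.linalg.qr(stacked, mode="r")
```

In an mpi4py version, step 2 would be a gather of the local R factors to one rank. Only p small n * n matrices travel over the network, never the tall blocks themselves, which is why TSQR suits tall-skinny matrices.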

· MPI_HouseHolderQR.py

MPI version of the Householder QR decomposition. It uses the Householder QR algorithm implemented in householderQR2.py.

It can be run as: mpiexec -n 4 python MPI_HouseHolderQR.py 1024 20

· MPI_CUDA_HouseHolderQR.py

MPI + CUDA implementation of the Householder algorithm. It uses householderCUDA.py for the CUDA part.

It can be run as: mpiexec -n 2 python MPI_CUDA_HouseHolderQR.py 1024 20

· performance_serial_CUDA.py

Performance comparison script for the serial and CUDA Householder implementations.

It can be run as: mpiexec -n 4 python performance_serial_CUDA.py 7 15 50. Here 7 and 15 are exponents: the comparison starts with 2^7 rows and finishes with 2^15 rows.

· performance_HHMPICUDA.py

Performance comparison script for the CUDA and MPI+CUDA implementations.

It can be run as: mpiexec -n 4 python performance_HHMPICUDA.py 7 15 50. Here 7 and 15 are exponents: the comparison starts with 2^7 rows and finishes with 2^15 rows.
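Both performance scripts sweep the row count over powers of two between a start and an end exponent. The sketch below shows such a sweep, timing NumPy's QR as a stand-in for the implementations being compared. The helper names are hypothetical. The fixed column count n_cols is this sketch's own parameter, not a documented meaning of the scripts' third argument (50).

```python
import time

import numpy as np


def row_sizes(start_exp, end_exp):
    """Row counts 2**start_exp, ..., 2**end_exp, both ends included."""
    return [2 ** k for k in range(start_exp, end_exp + 1)]


def time_call(func, *args, repeats=3):
    """Best wall-clock time of func(*args) over a few runs, in seconds."""
    best = float("inf")
    for _ in range(repeats):
        t0 = time.perf_counter()
        func(*args)
        best = min(best, time.perf_counter() - t0)
    return best


def sweep(func, start_exp, end_exp, n_cols, seed=0):
    """Time func on random m x n_cols matrices for each m in the sweep."""
    rng = np.random.default_rng(seed)
    results = []
    for m in row_sizes(start_exp, end_exp):
        A = rng.standard_normal((m, n_cols))
        results.append((m, time_call(func, A)))
    return results
```

Usage: for m, t in sweep(np.linalg.qr, 7, 15, n_cols=20): print(m, t). Taking the best of a few repeats is a common way to reduce timing noise from other activity on the node.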

· MPITSQR_useNPQR_formQ.py

Implementation of MPI + NumPy QR (TSQR) that explicitly forms Q.

In the previous version (MPITSQR_useNPQR.py), we did not form Q explicitly and calculated only R. This script also calculates Q.

It can be run as: mpiexec -n 4 python MPITSQR_useNPQR_formQ.py 1024 20
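Forming Q in TSQR takes one extra step. The Q factor of the stacked R matrix is cut into n * n slices, and each slice is multiplied back into the matching local Q. Below is a single-process sketch. It assumes an even row split with np.array_split, a flat one-level reduction, and at least n rows in every block. It is an illustration, not the code in MPITSQR_useNPQR_formQ.py, and the name tsqr_with_q is hypothetical.

```python
import numpy as np


def tsqr_with_q(A, nblocks):
    """One-level TSQR that also forms the thin Q explicitly (simulated)."""
    n = A.shape[1]
    blocks = np.array_split(A, nblocks, axis=0)  # one block per (would-be) rank
    # Step 1: reduced QR of each row block, block_i = Q_i R_i.
    local = [np.linalg.qr(b) for b in blocks]
    # Step 2: QR of the stacked local R factors, [R_1; ...; R_p] = Q2 R.
    Q2, R = np.linalg.qr(np.vstack([r for _, r in local]))
    # Step 3: the rows of block i get Q_i times the i-th n x n slice of Q2.
    q_blocks = [q @ Q2[i * n:(i + 1) * n, :] for i, (q, _) in enumerate(local)]
    return np.vstack(q_blocks), R
```

This works because A = diag(Q_1, ..., Q_p) [R_1; ...; R_p] = diag(Q_1, ..., Q_p) Q2 R, and a product of matrices with orthonormal columns again has orthonormal columns. In an MPI version, step 3 would send each rank its slice of Q2, so Q stays distributed by rows like A.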

· MPI_HouseHolderQR_formQ.py

Implementation of MPI + Householder algorithm that explicitly forms Q.

It can be run as: mpiexec -n 4 python MPI_HouseHolderQR_formQ.py 1024 20
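Householder QR usually stores Q as a sequence of reflector vectors rather than as a matrix. Forming Q explicitly means applying H_1 H_2 ... H_n to the first n columns of the identity. This is cheapest in reverse order, so that reflector k only touches rows k and below. Below is a minimal NumPy sketch of this standard backward accumulation. The function names are hypothetical, and whether MPI_HouseHolderQR_formQ.py builds Q exactly this way is an assumption.

```python
import numpy as np


def householder_vectors(A):
    """Householder QR of A: returns the unit reflector vectors and R (n x n)."""
    R = np.array(A, dtype=float)  # float copy; A is left untouched
    n = R.shape[1]
    vs = []
    for k in range(n):
        v = R[k:, k].copy()
        alpha = np.linalg.norm(v)
        v[0] += alpha if v[0] >= 0 else -alpha  # sign choice avoids cancellation
        norm_v = np.linalg.norm(v)
        if norm_v > 0.0:
            v /= norm_v
            R[k:, k:] -= 2.0 * np.outer(v, v @ R[k:, k:])  # apply H_k
        vs.append(v)  # a zero vector stands for H_k = I
    return vs, np.triu(R[:n, :])


def form_q(vs, m):
    """Explicitly form the thin m x n Q = H_1 H_2 ... H_n [I_n; 0]."""
    n = len(vs)
    Q = np.eye(m, n)
    # Apply the reflectors in reverse order; H_k only changes rows k and below.
    for k in range(n - 1, -1, -1):
        v = vs[k]
        Q[k:, :] -= 2.0 * np.outer(v, v @ Q[k:, :])
    return Q
```

Usage: vs, R = householder_vectors(A); Q = form_q(vs, A.shape[0]). Q @ R then reproduces A, and Q.T @ Q is the n * n identity up to rounding.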

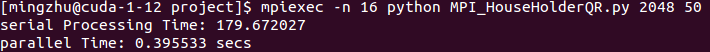

The application runs correctly, as shown below; this is the expected result.

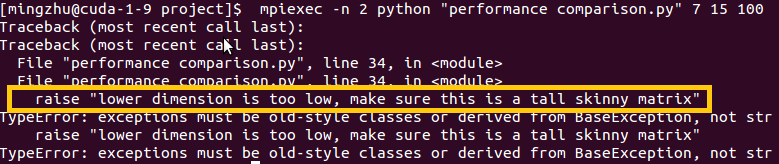

It throws an error message if the input matrix is not tall and skinny, because our algorithms are designed purely for tall-skinny matrices.
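Below is a minimal sketch of such an input check. The exact condition the scripts enforce is not stated, so this sketch assumes one. When the rows are split into nblocks blocks (for example one per MPI process), every block must still have at least n rows, so that each local QR yields a full n * n R factor. The function name check_tall_skinny is hypothetical.

```python
def check_tall_skinny(m, n, nblocks=1):
    """Raise ValueError unless an m x n matrix is tall and skinny enough.

    Assumed rule (hypothetical): after splitting the m rows into nblocks
    nearly equal blocks, the smallest block still has at least n rows.
    """
    if m <= 0 or n <= 0 or nblocks <= 0:
        raise ValueError("dimensions and block count must be positive")
    # With a near-even split, the smallest block has m // nblocks rows.
    if m // nblocks < n:
        raise ValueError(
            f"{m} x {n} matrix is not tall and skinny enough for "
            f"{nblocks} block(s)"
        )
```

For the examples above, check_tall_skinny(1024, 20, 4) passes, while a wide 20 * 1024 matrix is rejected.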

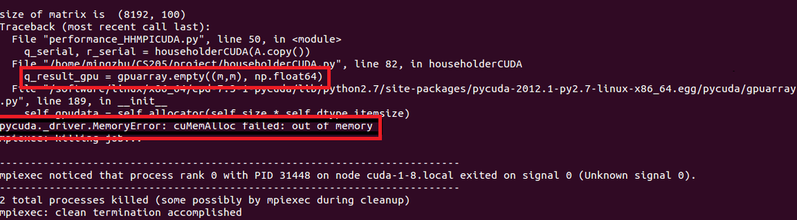

Unfortunately, a matrix of size 8192 * 100 cannot be processed because of the memory limit of the C2070 GPU.

CS 205 Final Project | Fall 2013 | Xueshan Zhang and Ming Zhu