DESIGN

We divided the implementation into 4 stages.

1. We tried to rewrite the householder QR algorithm in python. This could then serve as a benchmark for us to compare the performance.

2. As we saw, some heavy computational work exist in stage 1, we decided to use GPU to accelerate the performance. We used the CUBLAS library to implement matrix calculation involving matrix norm and vector matrix multiplication.

3. As this project is about TSQR ( Tall skinny QR), we have an algorithm to implement TSQR through reduction, and it is a perfect fit for MPI implementation. Therefore we decided to implement TSQR algorithm in MPI. In each TSQR part, we can choose to use numpy QR factorization or our own householder QR factorization.

4. As GPU QR factorization is faster than our own householder QR factorization, naturally we would like to combine these implementations. Therefore we have a final version of MPI+GPU to calculate the QR. However, we only have 2 GPU on the cluster, therefore our MPI processes could only be 2. Still, you can see the performance enhancement in our result analysis part.

Details on Stage 1:

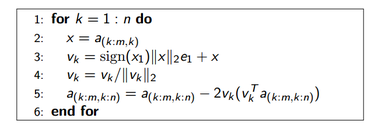

This is the standard QR factorization on householder algorithm. A Householder reflection (or Householder transformation) is a transformation that takes a vector and reflects it about a hyperplane. We can use this operation to calculate the QR factorization of an m-by-n matrix A with m >= n.

The standard algorithm could be found easily online. Here is one example for pseudo code.

The standard algorithm could be found easily online. Here is one example for pseudo code.

Details on Stage 2:

As householder QR in serial will have a lot of floating point multiplication. We can use GPU to send the matrix over to different processes and calculate the matrix dot products, matrix vector products, matrix norm. We used the cublas library to do this, as this is the product provided by scikits cuda package. Algorithm is the same as householder implementation at serial version. Here everything is using float64 to have more precision; of course, this will also have the performance impact.

Details on Stage 3:

MPI will first generate a matrix, on rank 0, it will then perform serial QR factorization, which will be considered as serial time. Then rank 0 will send the corresponding part of the data to other ranks. And on each rank, it could call the numpy version of QR to have the Q and R return. Then MPI will use reduction to form a new Q and a new R iteratively.

A difficult part of implementing this algorithm is to form the neighbourList. As each time MPI did the reduction, on the next iteration, we are not sending and receiving from all the MPI nodes. Rather, we just receive and send from certain number of nodes, and each time that list will be cut to half.

Therefore, I implemented two lists to handle such scenario. First one is tracking which process/rank should receive the data, and the second one tracks which one needs to send the data over. And each iteration we can update the two lists to match the reduction sequence.

Also, implementation of forming Q is relatively slow. As we will need to do matrix multiplication on each of the iteration, and save the Q matrix in a certain format. Therefore, the Q forming part takes the most of the time. As individual part of QR is done on different processes, the Q will need to be sent back to rank 0 after each iteration. That takes a large amount of time on the communication too. Therefore, we have two versions, one with MPI to form Q and R, one is only to form a R. As illustrated in the Motivation part, a single R is enough if we aim to get SVD via QR. We can use these functions depends on the needs.

A difficult part of implementing this algorithm is to form the neighbourList. As each time MPI did the reduction, on the next iteration, we are not sending and receiving from all the MPI nodes. Rather, we just receive and send from certain number of nodes, and each time that list will be cut to half.

Therefore, I implemented two lists to handle such scenario. First one is tracking which process/rank should receive the data, and the second one tracks which one needs to send the data over. And each iteration we can update the two lists to match the reduction sequence.

Also, implementation of forming Q is relatively slow. As we will need to do matrix multiplication on each of the iteration, and save the Q matrix in a certain format. Therefore, the Q forming part takes the most of the time. As individual part of QR is done on different processes, the Q will need to be sent back to rank 0 after each iteration. That takes a large amount of time on the communication too. Therefore, we have two versions, one with MPI to form Q and R, one is only to form a R. As illustrated in the Motivation part, a single R is enough if we aim to get SVD via QR. We can use these functions depends on the needs.

Details on Stage 4:

This stage is relatively easy once we have all the building blocks on previous sessions. We simply just changed in MPI implementation on how to calculate the QR. Instead of using serial version of HouseHolder, we changed it to parallel GPU version QR factorization. Indeed, this provides an impressive speedup.

CS 205 Final Project | Fall 2013 | Xueshan Zhang and Ming Zhu